Blogs

Leadership insights, best practices & advice to build better infrastructure for your secure, high-performing and scalable applications

How Startups Can Leverage Cloud Computing for Growth: A Phase-by-Phase Guide

Cloud Computing and the Phases in Life of a Startup Innovation and startups are usually

Release Management in Multi-Cloud Environments: Navigating Complexity for Startup Success

When starting a successful startup, it can take time to select the right provider. “All workloads

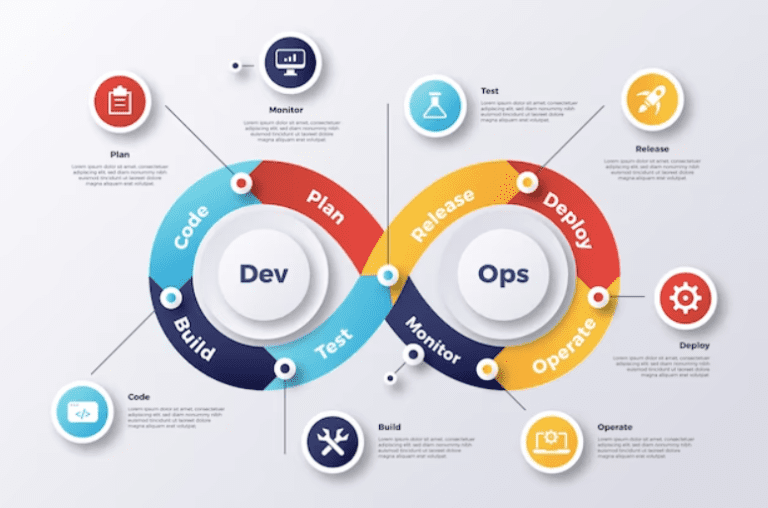

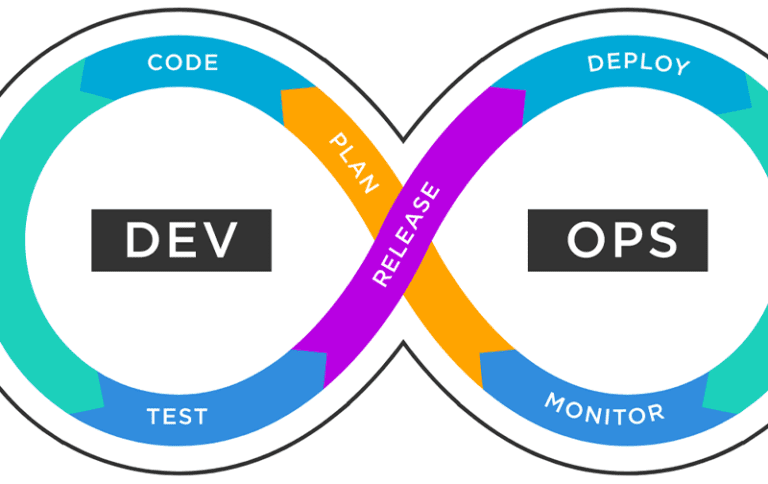

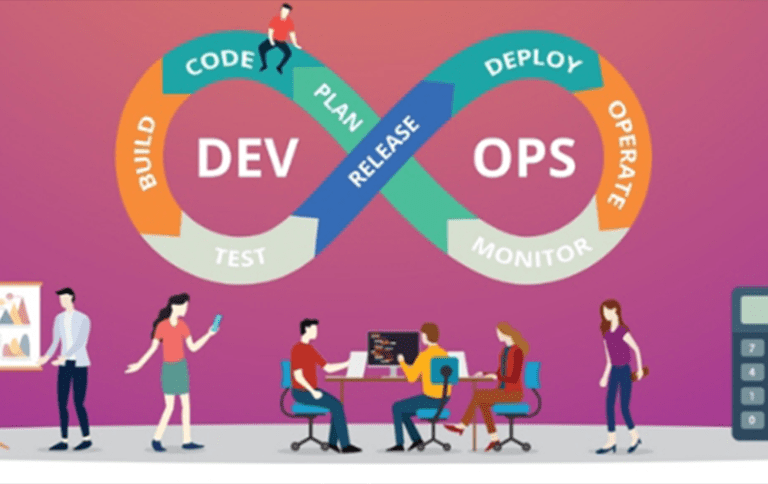

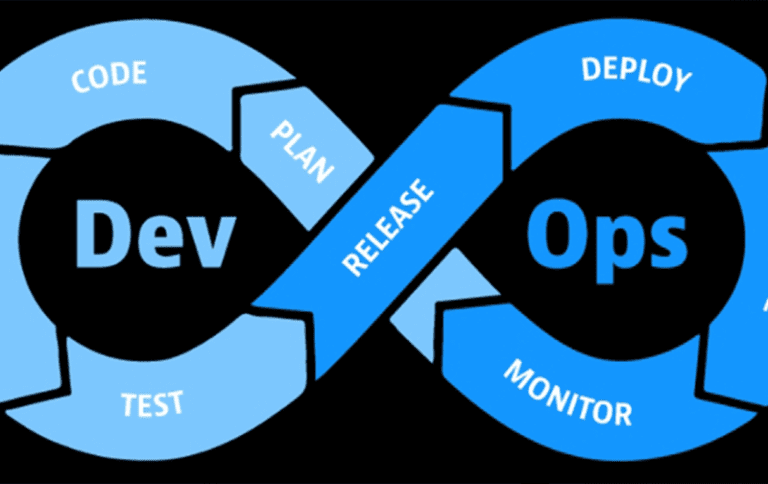

What Role does DevOps play in Enabling Efficient Release Management for Startups?

In the dynamic world of startups, effective release management plays a pivotal role in orchestrating the

Why Release Management Is So Challenging In DevOps?

Release Management for Startups Introduction DevOps release management is now a vital part of software

Power of Kubernetes and Container Orchestration

Welcome back to our ongoing exploration! Today we’ll be exploring containers and container orchestration with Kubernetes.

Launching Your Kubernetes Cluster with Deployment: Deployment in K8s for DevOps

In this article, we’ll be exploring deployment in Kubernetes (k8s). The first step will be to

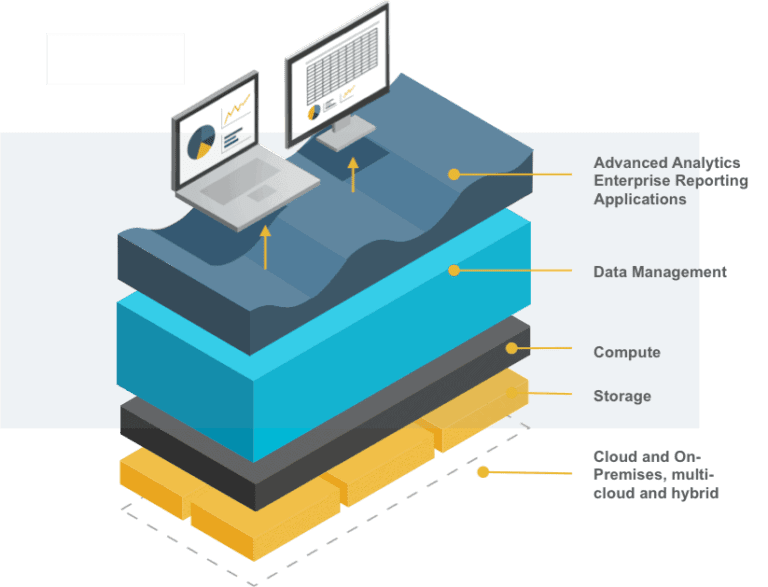

Well-Architected Framework Review

In today’s rapidly evolving technological landscape, mastering the Well-Architected Framework is not just crucial—it’s the compass

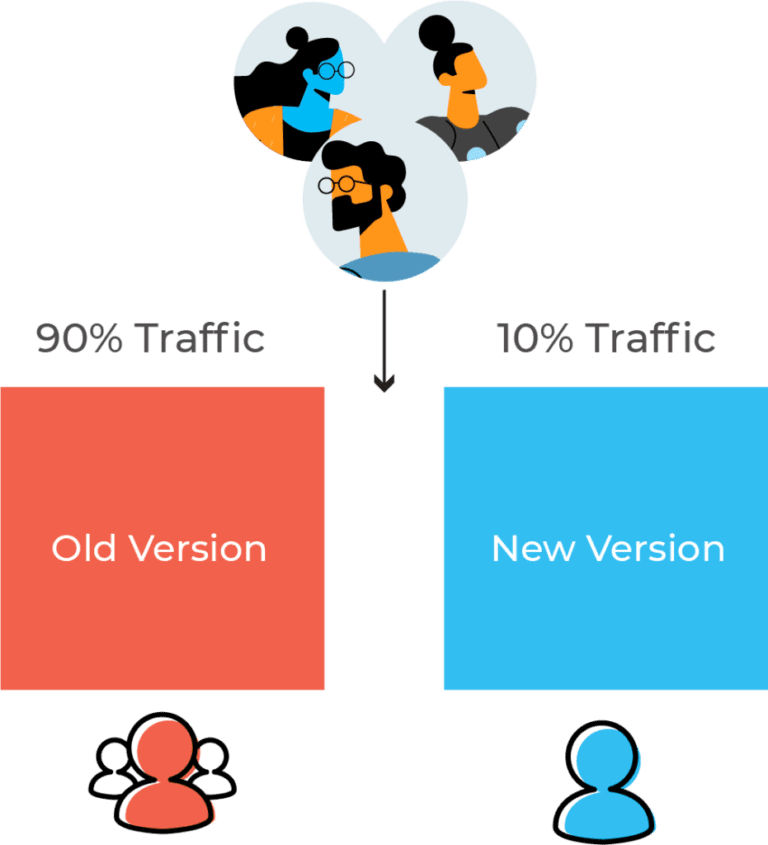

Application Deployment & The Various Deployment Types Explained

What is Deployment in simple words? Deployment is a process that enables you to retrieve you

Automating Release Processes: Increasing Efficiency and Reducing Errors for Startups

In this ever-evolving tech landscape, delivering high-quality software efficiently and reliably is crucial. Startups introduce new

Unleash the Power of AWS DevOps Tools: Supercharge Your Software Delivery

AWS DevOps Tools are essential for modern software delivery, enabling streamlined collaboration, automated workflows, and scalable

Overcoming Common Challenges in DevOps 2023: Embracing DevOps as a Service

DevOps is increasingly popular for software creation and management. DevOps as a service delivers goods faster,

Understanding Why The Release Is So Important?

In an era of tech-centric products, it becomes crucial to be on top of the game.

Serverless Security: Best Practices

Serverless Security and Security Computing Many cloud providers now offer secure cloud services using special security

What Are The Expected Benefits Of Building Automation in DevOps?

DevOps is a unique way of working that combines development and operations. It has had a

How to Manage Containers in DevOps?

DevOps Automation and Containerization in DevOps DevOps Automation refers to the practice of using automated tools

Exploring The Power of Serverless Architecture in Cloud Computing

Lately, there’s been a lot of talk about “serverless computing” in the computer industry. It’s a

More details on Cloud Management Platforms – Gartner and the Magic Quadrant

In today’s fast-paced tech world, cloud computing has become an integral part of the business landscape.

How to Containerize Applications and Deploy on Kubernetes

Containerization is a revolutionary approach to application deployment. It allows developers to pack an application with

10 Things Startups Should Look For While Launching a Product on Cloud

Build Automation Software and Cloud Platform In recent years there has been a rise in startup

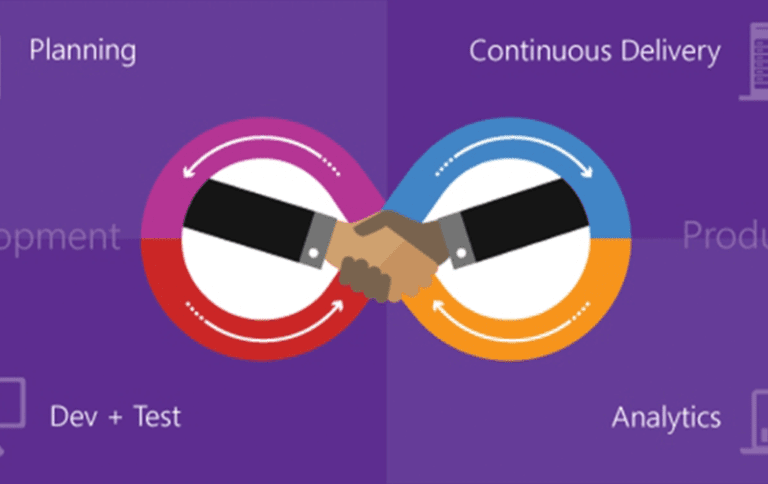

How do Continuous Integration and Continuous Deployment work?

How do Continuous Integration and Continuous Deployment work? Let us first get a brief on

Cloud Computing And Business Continuity: Why Startups And SMEs Need A Disaster Recovery Plan

A cloud disaster recovery plan is vital for Startups and SMEs, as it safeguards critical data,

How to Optimize Your Cloud Costs as a Developer

Efficient cloud storage pricing is crucial for businesses, enabling cost savings and scalability, and ensuring seamless

Cloud Computing and Innovation: How Startups and SMEs Can Leverage the Cloud for Competitive Advantage

In today’s fast-paced digital landscape, startups need a cloud computing platform to gain a competitive edge.

Building a Serverless Architecture in the Cloud: A Step-by-Step Guide for Developers

The concept of Serverless Architecture is becoming popular among businesses of all sizes. In traditional practices,

Securing Your Cloud Applications: Best Practices for Developers

Securing cloud applications is paramount in today’s digital landscape. It is important to protect sensitive data,

Deploying Microservices in the Cloud: Best Practices for Developers

Adopting a Cloud Platform Solution refers to implementing a comprehensive infrastructure and service framework that leverages

Developing Cloud-Native Applications: Key Principles and Techniques

The tech world is changing faster than ever and businesses need applications that can adapt to

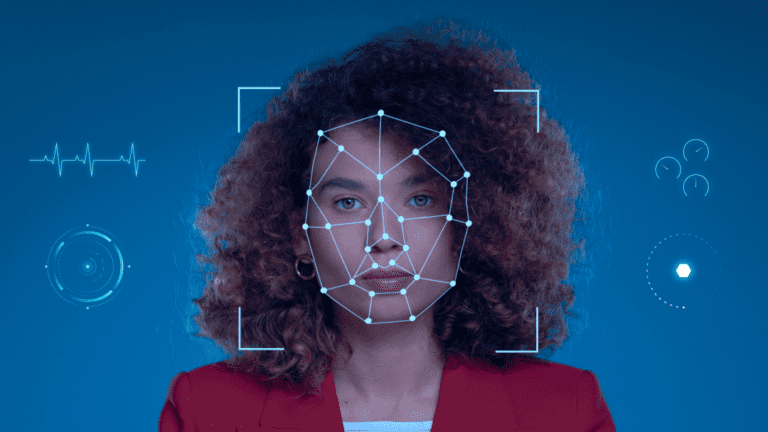

Real-time Computer Vision for Autonomous Systems

Real-time computer vision technology enables instant processing and analysis of visual data, allowing for applications in

Computer Vision and Machine Learning For Healthcare Innovation

Computer vision is transforming healthcare by enabling advanced imaging analysis to aid in diagnosis, treatment, and

Computer Vision In Robotics: Enhancing Automation In AI

As we move towards the future, robots have a growing potential to take in a broader

Innovations In Computer Vision For Improved AI

Computer vision is a branch of AI (Artificial Intelligence) that deals with visual data. The role of

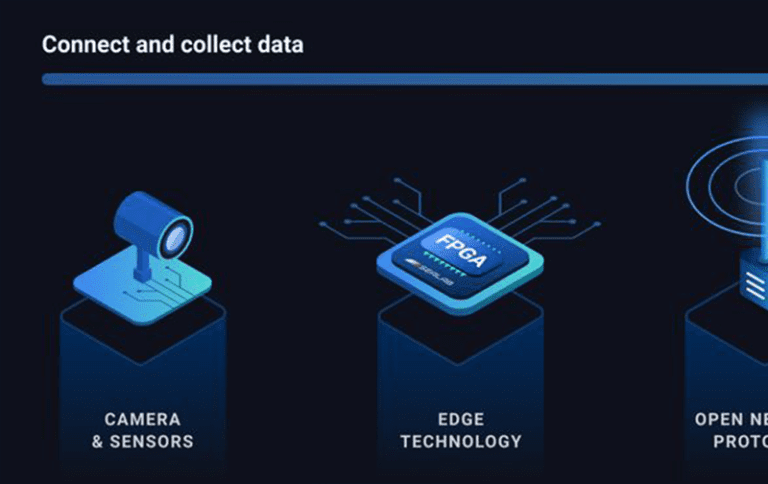

Efficient Deployment of Computer Vision Solutions with Edge Computing

Computer vision solutions are becoming a very important part of our daily life. It has many

Cloud-based Computer Vision: Enabling Scalability and Flexibility

CV APIs are growing in popularity because they let developers build smart apps that read, recognize,

Breaking Myths About Compliance & Licenses For Financial Services

Licensees often fail to report payments accurately in their license agreements. This is sometimes the result

How To Integrate DevOps Into Your Software Development Process

DevOps Integration is a critical element of modern software development and delivery processes. It refers to

The Role of Cloud Computing In Enhancing Customer Experience In Financial Services and Banking

Cloud computing has been a topic of discussion for quite a while now. Cloud computing refers

Challenges Faced By Financial Services While Scaling Application

The financial industry of today has several challenges. Some include security threats, various operating procedures, and

Everything To Keep In Mind While Working On Financial Services Application

FinTech has become ubiquitous, with its presence seen in everyday activities like scanning a QR code

Cloud Computing and Data Analytics in Financial Services and Banking

Introduction: Banks and financial services institutions are powerhouses of data. These organizations handle large volumes of

Benefits of Hybrid Infrastructure for Financial Services

In recent years cloud computing has become an important part of the financial industry. It has

Best Practices For Testing And Security in DevOps, Including Automated Security

DevOps security combines three words: development, operations, and security, and its goal is to remove

Collaboration & Communication Techniques for DevOps Teams | Agile Methodologies and Culture

DevOps teams are responsible for incorporating changes and delivering in a fast-paced and constantly evolving environment.

How To Manage And Monitor Microservices In A DevOps Environment

DevOps Environment is a culture, set of practices, and tools that enable development and operations teams

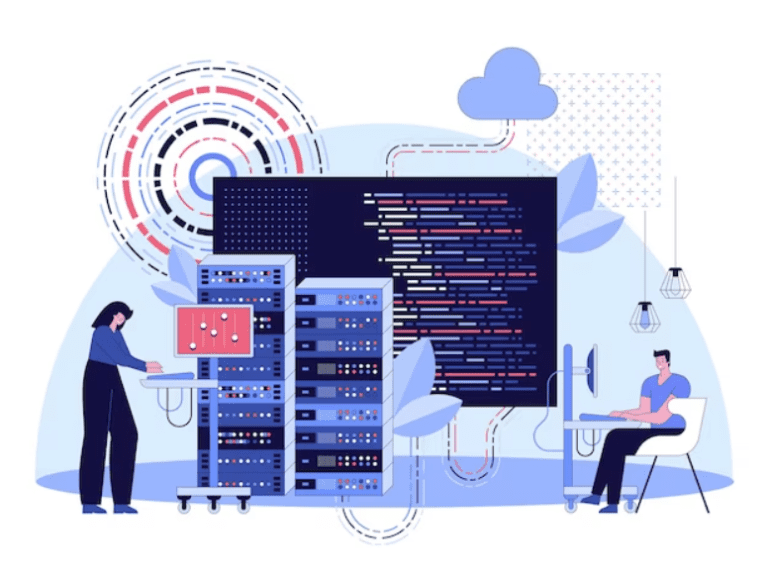

Automating Deployment And Scaling In Cloud Environments Like AWS and GCP

Introduction: Automating the deployment of an application in cloud environments like AWS (Amazon Web Services) and

How To Implement Containerization In Container Orchestration With Docker And Kubernetes

Kubernetes and Docker are important implementations in container orchestration. Kubernetes is an open-source orchestration system that

How to Setup a DevOps pipeline using popular tools like Jenkins, GitHub

Setup a DevOps pipeline using popular tools like Jenkins, GitHub Continuous Integration and Continuous Delivery, or

Understanding Continuous Integration (CI) and Continuous Deployment (CD) in DevOps

In a world full of software innovation, delivering apps effectively and promptly is a major concern

How To Manage Infrastructure As Code Using Tools Like Terraform and CloudFormation

Infrastructure as Code can help your organization manage IT infrastructure needs while also improving consistency and

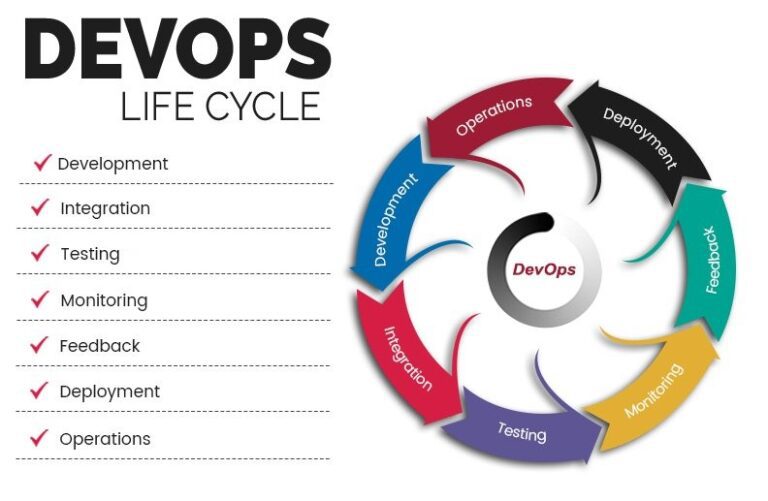

Introduction Of DevOps And Its Benefits For Software Development

When it comes down to it, the essence of DevOps is working culture. It encompasses a

Top 8 Benefits Of Using Cloud Technologies In The Banking Sector

The banking sector is increasingly turning to cloud technology to help them meet the demands of

Impact Cloud Computing Has On Banking And Financial Services

Cloud computing in the financial sector provides the opportunity to process large chunks of data without needing

All You Need To Know About Risk Management in Cloud Banking Systems

Risk management is a crucial aspect of cloud-based banking systems to ensure the security and stability

Potential Issues With CI/CD In Finance And How We Can Solve Them

Creating a functional Continuous Integration and Delivery pipeline involves a series of successful events. Still, common

Top 10 Data Security Challenges For Financial Services

Data security is a critical concern for financial services companies. The financial sector handles sensitive information,

5 Significant Challenges Faced By Financial Services While Choosing SaaS Service

Technological modernization makes it easier to carry out various business operations within a second. One can

How Can Financial Enterprises Benefit From Private 5G Network Architecture?

Private 5G is a special framework of 5G designed for big organizations to benefit from high

Is 5G Changing The Future Of Gaming Companies In India?

What is 5G technology? 5G is the fifth generation of mobile network technology. It

Best Practices and Case Studies for DevOps in Finance

DevOps in finance is one of the most innovative development practices in the financial sector. Moving quickly

FinOps and All That You Need To Know From DevOps Perspective

FinOps tools help cloud-based organizations allocate resources and achieve their business goals effectively. Read the

What is Multi-Cloud Migration for Traditional Businesses?

Multi-cloud migration is the process of moving an organization’s IT resources and workloads from one or

How can BFSI Companies Leverage the Latest Cloud Technology for the Best Customer Experience?

How can BFSI companies leverage the latest cloud technology? What does BFSI stand for? BFSI stands

Types of cloud infrastructure needed for BFSI to have continuous operations.

We have seen a lot more digital transformation globally in recent years. Cloud computing has become

Why do Financial Services use Multi-cloud to solve their Critical Security Loopholes?

When talking about the past two decades, most businesses have opted for a single public cloud

Why multi-cloud is the first choice of financial services to become cloud-native?

As the financial services industry continues to evolve and adapt to new technologies, many organizations are

Digital Transformations in Banking & Ways BFSI can thrive in dynamic technological advancements

Ways BFSI can thrive in dynamic technological advancements The banking, financial services, and insurance (BFSI) sector

Tactics to Manage Your Multi-Cloud Budget

For businesses managing multiple clouds, it can be difficult to optimize their budget to get the

Benefits of 5G For Business in App Development

Introduction 5G in app development will foster an era not only of high-speed internet networks but

Top 5 strategies for Cloud Migration in a Multi-cloud Architecture

The global trend in a post-pandemic world shows that businesses are moving towards a digital environment.

6 reasons why Startups should adopt multi-region application architecture

What is multi-region application architecture? Multi-region application architecture ensures we add a secondary region or more

5 examples to understand Multi-cloud and its future

Introduction The nature of technology reflects a gradual shift towards leaner, affordable and resilient innovation. The

Advantages and Drawbacks of migrating to Multi-cloud Infrastructure

Introduction Multi-cloud management is an innovative solution to increase business effectiveness. Because of the custom-made

Latest Multi-Cloud Market Trends In 2022-2023

Why is there a need for Cloud Computing? Cloud computing is getting famous as an

Top 5 Things You Should Look For In a Games Deployment Company

What should you look for in a Games Deployment Company? Let us talk about 5 Things

Latest games scheduled to release this year-end

There are a plethora of exciting forthcoming games to look forward to, ranging from brand-new series

Trends you need to know about Gaming Technology in 2023

Let us get to know about the latest gaming technology that will be trending in 2023.

The intersection of gaming and the Metaverse | Cloud Gaming Servers

The intersection of gaming and the metaverse needs cloud gaming servers. Let’s understand more about it. If

Edge Computing Market trends in Asia

Edge Computing is booming all around the globe, so let us look into what the

Generate 95% more profits every month by easy Cloud deployment on Nife

Cloud use is increasing, and enterprises are increasingly implementing easy cloud deployment tactics to cut IT

Future of smart cities in Singapore and India

This blog will explain the scope and future of Smart Cities in Singapore and India. The

All you need to know about the Marvel’s latest standalone Iron-man game

Marvel Entertainment and Motive Studio have joined forces to create an all-new Marvel’s latest standalone Iron

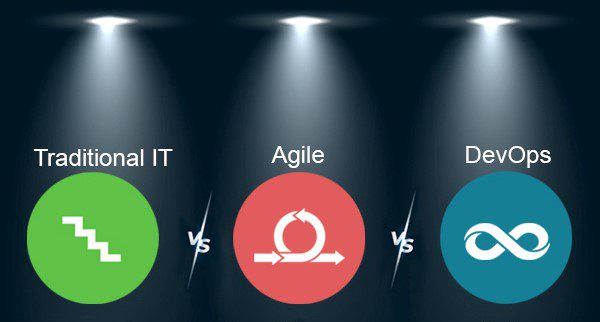

DevOps vs. Agile vs. Traditional IT! Which is better?

Let’s understand how DevOps vs. Agile vs. Traditional IT works! DevOps vs Agile is a topic

7 Proven methods to address the Dev and Ops challenges

In today’s article, we’ll go over 7 Proven Methods for Addressing DevOps Challenges. Dev and

Top Cloud servers to opt for to skyrocket your gaming experience

The cloud hosting industry’s growth pace continues to accelerate, with new developments becoming more interesting and

DevOps vs DevSecOps: Everything you need to know!

Which is preferable: DevSecOps or DevOps? While the two may appear quite similar, fundamental differences will

Quick Guide: Why are Cloud servers better for gaming?

Cloud gaming services allow you to play your favourite games on any device that has an internet connection and a display.

Build, innovate and scale your Apps – Nife will take care of the rest!

Are you in search of a Hybrid Cloud Platform, which can help you build, deploy, and

Amazing DevOps hacks to try right now | Nife

The tools, methods, and culture connected with DevOps have significantly evolved over the years, allowing this extremely valuable niche of professionals to be directed and supported by the correct attitude and, of course, technology.

8 reasons why modern businesses should adapt to DevOps

Implementing DevOps principles increases software quality while releasing new features and helps you to make changes quickly.

Top 10 communities for DevOps to join

Starting as a newbie in the DevOps sector can be difficult and daunting as you try to find DevOps-focused platforms from which you can learn. Successful DevOps programmers are continuously reading, learning, and deploying new concepts.

Optimize Ops resources by collaborating with an extended DevOps platform

A DevOps platform integrates the capabilities of developing, securing, and running software in a single application.

Should you optimize your Docker container?

To list Docker containers, use the commands ‘docker container ls’ or ‘docker ps’. Both commands accept the same flags because they act on the same object, a container. By default they show only running containers, so additional flags (for example, ‘-a’ to include stopped containers) are needed to get other results.

Adapt to the latest technologies to deliver a world-class customer experience

Cloud computing technologies are a means to many goals that each organisation must identify as part of a unified cloud strategy. There are several ways that Cloud computing technology may have a real-world influence across industries for companies aiming to change the customer experience.

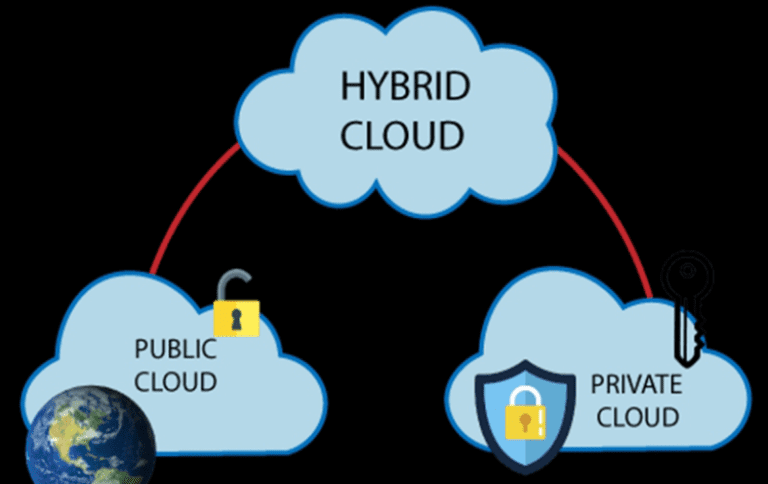

Cloud Deployment Models and their types

A cloud deployment model denotes a specific cloud environment depending on who controls security, who has access to resources, and whether they are shared or dedicated. The cloud deployment model explains how your cloud architecture will appear, how much you may adjust, and whether or not you will receive services.

How does cloud computing affect budget predictability for CIOs?

CIOs will need to remain up to date on the newest innovations to make the best decisions on behalf of their businesses and drive their digital transformation. Because of the cloud’s influence, as well as the DevOps movement, software development and IT operations have been merged and simplified.

Five Essential Characteristics of Hybrid Cloud Computing?

A hybrid cloud environment combines on-premises infrastructure, private cloud services, and a public cloud from providers such as Amazon Web Services (AWS) or Microsoft Azure, with orchestration across the platforms.

What is 5G Telco Edge? Telco Edge Computing

Telco edge computing, on the other hand, comprises workloads operating on client-premises equipment and other points of presence at the customer site.

What to look out for when evaluating potential cloud providers?

There are many cloud providers in Singapore, such as NIFE, a developer-friendly serverless platform designed to let businesses quickly manage, deploy and scale applications globally.

The Advantages of Cloud Development: Cloud Native Development

Cloud development is the process of creating, testing, delivering, and operating software services on the cloud. Cloud software refers to programmes developed in a cloud environment.

DevOps as a Service: All You Need To Know!

Many mobile app development organisations across the world have adopted the DevOps as a service mindset. It is a culture that every software development company should follow since it speeds up software development and reduces its risk.

What is the Principle of DevOps?

DevOps is a software development culture that integrates development, operations, and quality assurance into a continuous set of tasks (Leite et al., 2020). It is a logical extension of the Agile technique, facilitating cross-functional communication, end-to-end responsibility, and cooperation.

Simplify your deployment process | Cheap Cloud Alternative

Multi-access Edge Computing provides cloud computing capabilities and an IT service environment at the network’s edge to application developers and content suppliers.

Cloud Deployment Models and Cloud Computing Platforms

Cloud computing is a model that enables ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or interaction from service providers.

Hybrid Cloud Deployment and its Advantages

You may increase your cloud computing capacity without raising your data centre costs by adding a public cloud provider to your existing on-premises architecture.

What is Edge to Cloud? | Cloud Computing Technology

The increased requirement for real-time data-driven decision-making, particularly by Edge Computing for Enterprises, is one driver of today’s edge-to-cloud strategy.

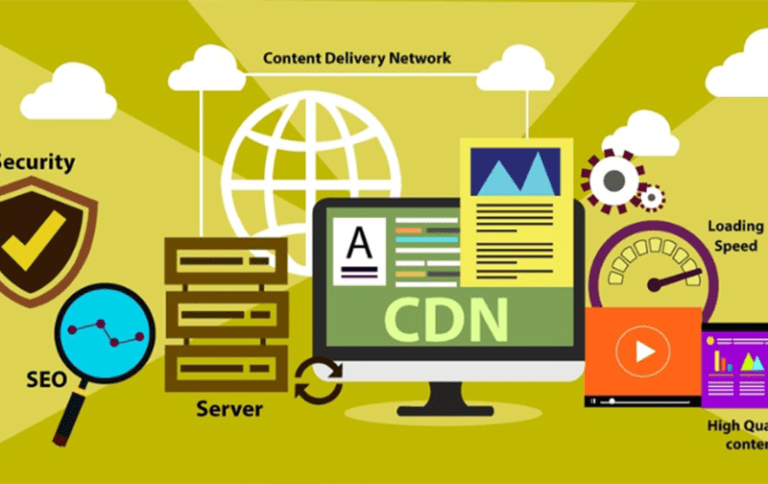

Content Delivery Networking | Best Cloud Computing Companies

Content Delivery Networks, or CDNs, have grown in popularity. Content delivery networking relies on globally distributed servers that let consumers get content with minimal delay.

Save Cloud Budget with NIFE | Edge Computing Platform

NIFE is a Singapore-based Unified Public Cloud Edge platform for securely managing, deploying, and scaling any application globally using Auto Deployment from Git.

Real-time Application Monitoring

NIFE is a serverless platform for developers that allows enterprises to efficiently manage, launch, and scale applications internationally.

Cloud Cost Management | Use Nife to save Cloud Budget

Apply the best practices for cloud cost management given below to create a cloud cost optimization plan that relates expenses to particular business

Cloud Computing Platforms | Free Cloud Server

Nife is a Unified Public Cloud Edge Platform and requires no DevOps, servers, or cloud infrastructure services management.

Network Slicing & Hybrid Cloud Computing

5G facilitates the development of new business models in all industries. Network slicing will play a critical role in enabling service providers to offer innovative products and services, access new markets, and grow their businesses.

Transformation of Edge | Cloud Computing Companies

Organizations recognize the need to modify their processing practices and are adopting Edge Computing to speed up their digitalization activities.

Interconnection Oriented Architecture | Edge Network

IOA is capable of creating a value approach that fits your company’s demands and therefore can respond more swiftly in the future by leveraging most of your current communications infrastructure.

Content Delivery Networking | Digital Ecosystems

Digital Ecosystem Management (DEM) is a new business field that has arisen in reaction to digitalization and digital ecosystem connectivity.

Enhancing user experience and facilitating innovation with Edge Compute

Edge computing is the process of operating programs at the network’s edge instead of on centralised equipment in a data centre or the cloud.

Develop Digital First Culture | Edge Computing Applications

Developing a digital-first culture entails more than just using cutting-edge technologies.

What are Cloud Computing Services [IaaS, CaaS, PaaS, FaaS, SaaS]

These cloud computing services offer on-demand computing capabilities to meet the demands of consumers. They provide options by keeping IT infrastructure open, from data to apps. The field of cloud-based services is wide, with several models.

Why Hybrid Cloud? An overview of the top benefits of hybrid

The mix of private and public cloud platforms that allows applications to migrate between the two interrelated domains is the cornerstone of a hybrid cloud paradigm (Aktas, 2018). This portability across cloud services allows enterprises to be more flexible and agile in their configurations.

Container as a Service (CaaS)-A Cloud Service Model

A single container may host anything from a small service or program to a large application. All compiled code, binaries, frameworks, and application settings are contained within the container.

Artificial Intelligence at Edge: Implementing AI, the unexpected destination of the AI journey

This is when things start to get interesting. However, a few extreme cases, such as Netflix, Spotify, and Amazon, are not enough. Not only is it difficult to learn from extreme cases, but as AI becomes more widespread, we will be able to find best practices by looking at a wider range of enterprises.

Gaming Industry’s Globalisation | Best Edge Platform

The video game industry’s globalisation and technical requirements are expanding, with more powerful visual effects demanding high processing capacity, better displays, capable adapters, and low-latency networks.

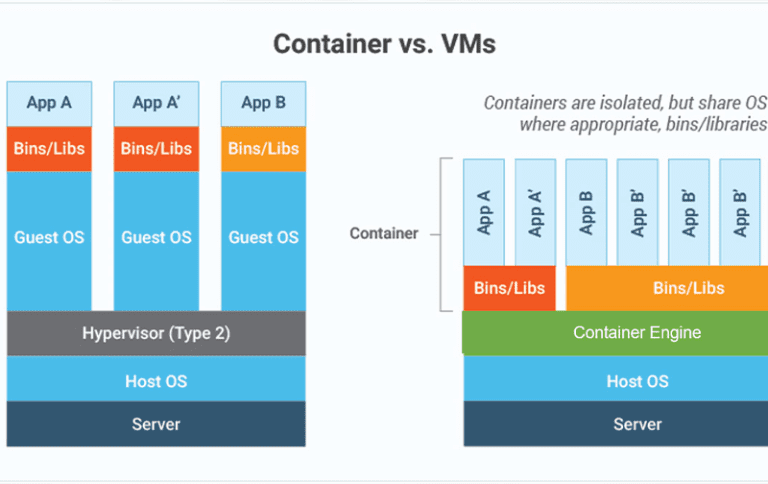

Containers or Virtual machines? Get the most out of your Edge Computing tasks

Consider how aggravating it is to play a multiplayer game and have the frame rate drop, or to stream a video and have the picture or sound lag. Edge computing is useful whenever speed is important or the data produced has to be kept close to its consumers.

How Can 5G Connections Deliver 100 Times Faster and Monetize

In this age of the internet, customers seek faster, stronger, more accessible, and more innovative data services. Most users want to view videos on their phones as well as download files and operate a variety of IoT devices. They expect a 5G connection to deliver 100 times faster speeds, ten times greater capacity, and ten times lower latency.

Smart Stadiums: The world and the world it can be!

It’s difficult to imagine a market in which live streaming isn’t an essential component, thanks to the entertainment and media businesses, which have been supported by an ever-increasing number of lateral use scenarios.

How and Why of Edge and AR | Edge Computing Platform

AR (augmented reality) and VR (virtual reality) are still considered specialized innovations that have yet to be widely accepted. A lot of it comes down to issues that edge computing can now solve. Following the commercial release of 5G, AR and VR encompass a slew of innovative use cases that, when combined with the network edge, will provide significant value to the sector and to businesses.

Computing versus Flying Drones | Edge Technology

Drone computing may considerably reduce task latency and communication power usage by making use of the line-of-sight qualities of air-ground links. Drone computing, for example, can be useful in disaster zones, emergencies, and conflicts when ground equipment is scarce.

5G Technology shaping the experience of sports audiences

Sports fans want an experience enhanced by their mobile devices in this era of online and mobile usage. As consumers grow more discerning and seek a more interactive, inventive, and entertaining experience, the number of virtual events is expanding, pushing the envelope again for the style and durability of the event.

5G technology | Cloud Computing Companies

Real 5G, which will support anything from utility and industrial grids to automated cars and retail customers, has the potential to completely overrun the network’s edges.

Playing Edgy Games with Cloud, Edge | Cloud Computing Services

Edge technology is a modern industry that has sprung up as a result of the shift of processing resources from the cloud to the edge.

AI-driven Businesses | AI Edge Computing Platform

By evaluating and exploiting high-quality data, AI can solve problems and boost business efficiency regardless of the size of a company.

AI and ML | Edge Computing Platform for Anomalies Detection

There is a common debate on how edge computing platforms can be used for anomaly detection.

Artificial Intelligence – AI in the Workforce

Using machine learning and artificial intelligence for capacity management is critical for making intelligent network financial decisions that optimise total cost of ownership (TCO) while offering the highest return in terms of service quality.

5G Network Area | Network Slicing | Cloud Computing

The concept of network slicing is incomplete without the cooperation of communication service providers. It ensures that services enabled by 5G Radio Access Network (RAN) slicing are both dependable and effective.

Machine Learning-Based Techniques for Future Communication Designs

Machine-learning-based technologies for observation and administration are especially suitable for sophisticated network infrastructure operations.

Location Based Network Simulators | Network Optimization

Network optimization is a combination of techniques and practices aimed at improving a network’s general health. It manages all components, from the client to the servers, as well as their operations and interconnections.

5G in Healthcare Technology | Nife Cloud Computing Platform

5G has the potential to revolutionize healthcare as we know it. As we saw during the last epidemic, the healthcare business needs tools that can serve people from all socioeconomic backgrounds.

5G Monetization | Multi Access Edge Computing

“Harnessing the 5G consumer potential” and “5G and the Enterprise Opportunity” are two studies that go through the various market prospects.

Edge VMs And Edge Containers | Edge Computing Platform

Edge computing is a collection of localized mini data centres that relieve the cloud of some of its responsibilities, acting as a form of “regional office” for local computing chores rather than transmitting them to a central data centre thousands of miles away.

Edge Gaming The Future

The emergence of cloud gaming services is one of the most exciting advances in cloud computing technology in recent years.

Nife Edgeology | Latest Updates about Nife | Edge Computing Platform

Nife is a Unified Public Cloud Edge Platform to Manage, Deploy and Scale any application securely globally with Auto Deploy from Git. Zero DevOps, Servers, or Infrastructure Management.

About Nife – Contextual Ads at Edge

To get this perfect mix of customer experience and media monetization, advertisers will need a technology framework that harnesses various aspects of 5G, such as small cells and network slicing, to deliver relevant content in real time with zero latency and lag-free advertising.

Differentiation between Edge Computing and Cloud Computing | A Study

Are you familiar with the differences between edge computing and cloud computing?

Is edge computing a type of branding for a cloud computing resource, or is it something new altogether? Let us find out!

Condition-Based Monitoring at Edge – An Asset to Equipment Manufacturers

Large-scale manufacturing units, especially industrial setups, have complicated equipment. The equipment is expensive, and any device failure can be costly for the company. Can this cost be reduced?

Learn More!

Computer Vision at Edge and Scale Story

Computer vision forms a significant part of the new age of surveillance. Surveillance cameras can be dumb or intelligent, but intelligent cameras are expensive. Every country has some laws associated with video surveillance.

How do video analytics companies serve their customers properly when demand is high?

Nife helps with this.

Case Study 2: Scaling deployment of Robotics

Robots have brought a massive change in the present era, and so we expect them to change the next generation. While the next generation of robots may not do all human work, robotic solutions help with automation and productivity improvements. Learn More!

Case Study: Scaling up deployment of AR Mirrors

Smart mirrors, the future of mirrors, are known to be the world’s most advanced Digital Mirrors. Augmented Reality mirrors are a reality today, and they hold certain advantages amidst COVID-19 as well.

Learn More about how to deploy and scale Smart Mirrors.

How the Pandemic is shaping 5G network innovation and rollout?

What’s happening with 5G and the 5G rollout? How are these shaping innovation and the world we know? Are you curious? Read More!

Videos at Edge | Unilateral Choice

Why are videos the best to use with Edge? What makes edge special for videos? This article covers aspects of video at the edge and why it is a unilateral choice! Read on!

Intelligent Edge | Edge Computing in 5G Era

Edge Cloud computing refers to a process through which the gap between computing and network vanishes. We can provide computing at different network locations through storage and compute resources.